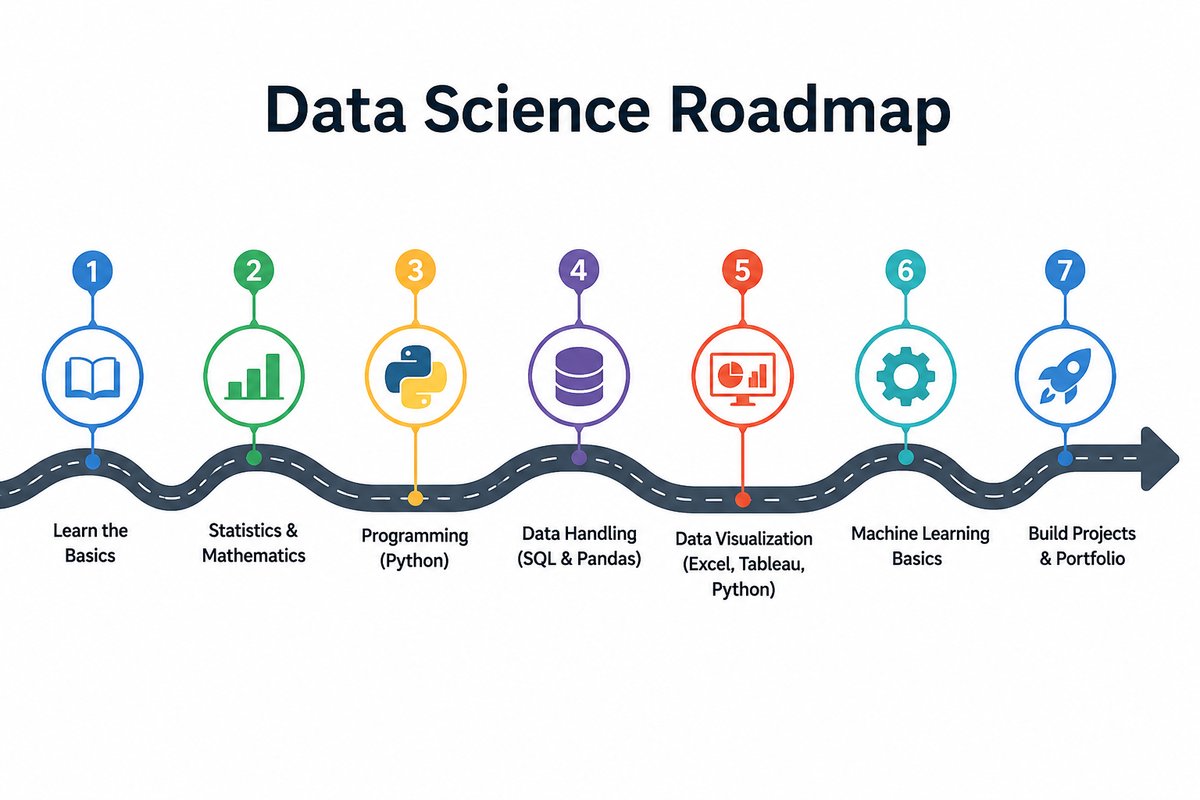

Data Science Roadmap: From print('hello') to Production LLMs


A 31-module open-source data-science course you can finish in a weekend or stretch over a month.

Why I built this

Most “learn data science” courses do one of two things badly:

- They lock the good stuff behind a subscription and split it across three separate courses that never talk to each other.

- Or they jump from `print("hello world")` straight to a Kaggle notebook, with no in-between.

This repo is the in-between. 31 deep-dive notebooks, every one runnable in Google Colab in one click, every one paired with a colour-coded explanation document. From your first variable to reading the inference code of a 671-billion-parameter LLM.

Who it’s for

- Beginners who want a structured path that doesn’t skip steps.

- Self-taught coders who can write Python but want to fill the gaps in Pandas, NumPy, scikit-learn — and beyond.

- Career-switchers building a portfolio. The capstone notebook (Module 16) and the production modules (M29-M31) are portfolio-ready as-is.

What’s inside — six parts

Part 1 · Python for Data Science (Modules 1–5)

Variables, data structures, OOP, file I/O, NumPy, Pandas, APIs, web scraping. The alphabet of every later module.

Part 2 · Data Visualization (Modules 6–10)

Matplotlib’s object-oriented API; the seven core chart types; specialised tools (waffle, word cloud, Folium maps); animation and Plotly; building dashboards that tell one cohesive story.

Part 3 · Data Analysis & ML Foundations (Modules 11–16)

The universal workflow: import → wrangle → explore → model → evaluate → communicate. Built around a shared dataset (auto-mpg) so each step builds on the last, then validated end-to-end on California Housing.

Part 4 · Machine Learning & AI (Modules 17–22)

PyTorch fundamentals; the six core model archetypes (Linear, Logistic, K-Means, MLP, CNN, Transformer LM); self-attention from scratch with d_model = 2 so every matrix is hand-checkable; multi-head + causal attention; diffusion models on a 2D toy; time-series forecasting with ARIMA, Prophet, and LSTM.
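
To give a feel for the hand-checkable attention exercise, here is a minimal sketch in plain Python. It is not the notebook's code: the helper names (`matmul`, `softmax`, `self_attention`), the three example tokens, and the identity projection matrices are all assumptions, picked so that every number stays small enough to verify on paper with d_model = 2.

```python
import math

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x, w_q, w_k, w_v, causal=False):
    """Scaled dot-product self-attention for a single head."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = [[s / math.sqrt(d_k) for s in row] for row in matmul(q, transpose(k))]
    if causal:
        # Token i may only attend to positions j <= i.
        scores = [
            [s if j <= i else float("-inf") for j, s in enumerate(row)]
            for i, row in enumerate(scores)
        ]
    weights = [softmax(row) for row in scores]
    return weights, matmul(weights, v)

# Hypothetical toy input: three tokens, d_model = 2, identity projections.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]

weights, output = self_attention(tokens, identity, identity, identity)
causal_weights, causal_output = self_attention(
    tokens, identity, identity, identity, causal=True
)

for row in weights:
    print([round(w, 3) for w in row])
```

With the causal mask on, the first token can only see itself, so its attention row collapses to all weight on position 0 and its output equals its own value vector.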

Part 5 · AI-Research Foundations (Modules 23–25)

The math under every neural network (functions, derivatives, gradients, matrices, probability) plus a deep PyTorch primer. A guided tour of DeepSeek-V3’s actual inference code (RMSNorm, RoPE, Multi-Latent Attention, Mixture-of-Experts). Fine-tuning examples — full fine-tuning, LoRA, QLoRA, and SFT with TRL.
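
As a taste of the building blocks named above, here is a rough sketch of RMSNorm in plain Python. This is the generic textbook form (divide by the root mean square, then apply a learned per-feature scale), not DeepSeek-V3's inference code; the function name `rms_norm`, the `eps` default, and the example vector are assumptions for illustration.

```python
import math

def rms_norm(x, weight, eps=1e-6):
    """Scale x so its root mean square is about 1, then apply per-feature gains."""
    mean_sq = sum(v * v for v in x) / len(x)
    scale = 1.0 / math.sqrt(mean_sq + eps)  # eps guards against division by zero
    return [w * v * scale for v, w in zip(x, weight)]

# Hypothetical activation vector with unit gains.
hidden = [3.0, 4.0]
normed = rms_norm(hidden, weight=[1.0, 1.0])
print(normed)
```

Unlike LayerNorm, nothing is subtracted first: the vector keeps its direction and only its length changes.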

Part 6 · Practitioner Skills (Modules 26–31)

The day-to-day skills a working data scientist or ML engineer uses but most courses skip:

- SQL — JOINs, CTEs, window functions, the SQL ↔︎ Pandas bridge

- Tree-based models — Random Forest, XGBoost, LightGBM, SHAP for interpretation

- A/B testing — proportion z-test, sample-size calc, Bonferroni / BH correction, the peeking trap

- MLOps — FastAPI, Docker, MLflow, drift monitoring with KS + PSI

- RAG & vector search — embeddings, Chroma, hybrid BM25 + vector, reranker, grounded answers

- Prompt engineering & LLM eval — few-shot, chain-of-thought, ReAct, structured outputs, LLM-as-judge
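
To make the A/B-testing bullet concrete, here is a small sketch of a pooled two-proportion z-test plus a Bonferroni check, using only the standard library. The function names, the conversion counts, and the p-values in the example are made up for illustration; the notebook itself may well use a statistics library instead.

```python
import math

def normal_cdf(z):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def two_proportion_ztest(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates (pooled variance)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - normal_cdf(abs(z)))
    return z, p_value

def bonferroni(p_values, alpha=0.05):
    """Flag which tests stay significant after dividing alpha by the test count."""
    threshold = alpha / len(p_values)
    return [p <= threshold for p in p_values]

# Hypothetical experiment: 100/1000 conversions in control, 150/1000 in variant.
z, p = two_proportion_ztest(100, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")

# Hypothetical p-values from three metrics tested at once.
print(bonferroni([0.01, 0.04, 0.03]))
```

The peeking trap comes from running this test repeatedly while data is still arriving and stopping at the first significant result. Each look is one more chance for a false positive.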

What makes it different

| | This course | Typical course |
|---|---|---|
| Production architecture depth | DeepSeek-V3 dissection | “Transformers exist” |
| Math integrated with code | Yes (Module 23) | usually skipped |
| Practical skills (SQL, A/B, MLOps) | Modules 26–29 | rarely covered |
| Companion docs | Line-by-line, colour-coded, ~30 pages each | None |
| Cost | Free, MIT-licensed | $40–300/month |

How to use it

Option A — Colab. Click any badge in the README, hit Save a copy in Drive, run the cells. Zero install.

Option B — Locally.

    git clone https://github.com/kader-xai/data-science-roadmap.git
    cd data-science-roadmap
    pip install jupyter numpy pandas scikit-learn torch transformers
    jupyter notebook

What you walk away with

After the 31 modules you can:

- Write any Python program and load data from any source.

- Build classical ML models (regression, gradient boosting) AND modern AI models (transformers, diffusion).

- Read production LLM source code (DeepSeek, Llama, Mistral, Qwen).

- Fine-tune any open-weight model on your own data with LoRA.

- Ship a model behind FastAPI + Docker with MLflow tracking.

- Build a working RAG pipeline with vector search.

- A/B-test prompts and evaluate LLMs scientifically.

That covers most of what a 2026 ML-engineer role asks for, built up from print('hello').

- Repo: github.com/kader-xai/data-science-roadmap
- Live site: kader-xai.github.io/data-science-roadmap
- License: MIT

Module index

For anyone scanning for a specific topic, here’s the module list: one line per module for Parts 1–3, and a summary for Parts 4–6.

Part 1 · Python for Data Science

| # | Module | Topic |
|---|---|---|
| 01 | Python Basics | variables, types, strings, format strings, debugging |
| 02 | Data Structures | lists, tuples, dicts, sets, comprehensions |
| 03 | Programming Fundamentals | conditionals, loops, functions, exceptions, OOP |
| 04 | Working with Data | files, CSV/JSON, NumPy arrays, Pandas DataFrames |
| 05 | APIs & Web Scraping | requests, BeautifulSoup, pd.read_html, yfinance |

Part 2 · Data Visualization

| # | Module | Topic |
|---|---|---|
| 06 | Intro to Visualization | Matplotlib OO API, line plots, styling |
| 07 | Basic Charts | bar, hist, pie, box, scatter, bubble, area |
| 08 | Specialized Viz | waffle, word cloud, regression plot, Folium |
| 09 | Advanced Viz | subplots, time-series patterns, animation, Plotly |
| 10 | Dashboards & Storytelling | composing charts to answer one question |

Part 3 · Data Analysis & ML Foundations

| # | Module | Topic |
|---|---|---|
| 11 | Importing Data | CSV, Excel, JSON, SQL, web; the 5-line inspection ritual |
| 12 | Data Wrangling | missing values, scaling, binning, encoding, outliers |
| 13 | Exploratory Data Analysis | distributions, correlations, group-bys, pivot tables |
| 14 | Model Development | linear / multiple / polynomial regression with Pipelines |
| 15 | Model Evaluation | MSE/RMSE/MAE/R², CV, Ridge & Lasso, GridSearch |
| 16 | Capstone | California Housing end-to-end with Random Forest |
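
For reference, the four metrics listed for Module 15 fit in a few lines of plain Python. This is a sketch of the standard definitions, not the notebook's code (which most likely calls scikit-learn); the function name `regression_metrics` and the toy values are assumptions.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return MSE, RMSE, MAE, and R² for paired lists of targets and predictions."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    mean_y = sum(y_true) / n
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)  # variance around the mean
    ss_res = mse * n                                  # variance left after the model
    return {"mse": mse, "rmse": math.sqrt(mse), "mae": mae, "r2": 1.0 - ss_res / ss_tot}

# Hypothetical targets and predictions.
metrics = regression_metrics([3.0, 5.0, 7.0, 9.0], [2.0, 5.0, 8.0, 9.0])
print(metrics)
```

MSE and RMSE punish large misses more heavily than MAE does, and R² compares the model against always predicting the mean.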

Part 4 · Machine Learning & AI (deeper dive)

PyTorch fundamentals; the six core archetypes (Linear, Logistic, K-Means, MLP, CNN, Transformer LM); self-attention from scratch; multi-head + causal attention; diffusion models on a 2D toy; time-series with ARIMA, Prophet, and LSTM.

Part 5 · AI-Research Foundations

Math foundations integrated with code, a deep PyTorch primer, a guided dissection of DeepSeek-V3’s actual inference code (RMSNorm, RoPE, Multi-Latent Attention, Mixture-of-Experts), and worked fine-tuning examples — full fine-tuning, LoRA, QLoRA, and SFT with TRL.

Part 6 · Practitioner Skills

The day-to-day skills most courses skip — SQL · tree-based models with SHAP · A/B testing · MLOps with FastAPI/Docker/MLflow · RAG with vector search · prompt engineering and LLM eval.
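
As one example from the SQL module's territory, here is a sketch that combines a CTE with a window function, run through Python's built-in `sqlite3` so nothing needs installing. The `orders` table, its columns, and the sample rows are invented for illustration, and window functions assume the bundled SQLite is version 3.25 or newer.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (customer TEXT, amount REAL);
INSERT INTO orders VALUES
    ('ana', 30), ('ana', 50),
    ('ben', 20), ('ben', 70), ('ben', 10);
""")

query = """
WITH totals AS (                       -- CTE: one total per customer
    SELECT customer, SUM(amount) AS total
    FROM orders
    GROUP BY customer
)
SELECT o.customer,
       o.amount,
       t.total,
       RANK() OVER (                   -- window: rank orders within each customer
           PARTITION BY o.customer ORDER BY o.amount DESC
       ) AS rank_in_customer
FROM orders AS o
JOIN totals AS t ON t.customer = o.customer
ORDER BY o.customer, rank_in_customer;
"""

rows = conn.execute(query).fetchall()
for row in rows:
    print(row)
conn.close()
```

On the Pandas side, the same result would typically come from a `groupby("customer")` followed by `transform("sum")` for the totals and `rank(method="min", ascending=False)` for the ranking.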

Try it

The fastest path in is:

- Open Module 1 in Colab

- Click File → Save a copy in Drive

- Run cells with `Shift+Enter`

If you only want the retrieval part of the AI track without training a model, jump to Modules 30–31 — the RAG and prompt-engineering notebooks stand on their own.